Previous articles

Web Scraping for Fun and Profit: A Primer

Web Scraping for Fun and Profit: Part 1

Web Scraping for Fun and Profit: Part 2

Web Scraping for Fun and Profit: Part 3

Web Scraping for Fun and Profit: Part 4

Web Scraping for Fun and Profit: Part 5

Web Scraping for Fun and Profit: Part 6

Web Scraping for Fun and Profit: Part 7

Recap

In Part 1 we installed Python, pip, and the Requests library. We set up a basic program which fetches the content of this site. In Part 2, we installed BeautifulSoup and used it to parse the page in order to extract the data we care about. In Part 3, we installed MongoDB and the pymongo library, and used them to determine if the data fetched is new. In Part 4, we added a basic function to format an extracted post for SMS delivery and used Twilio to send this SMS. In Part 5, we added a few command-line options to help us in future development as we add support for new sites. In Part 6, we went over basic error handling and how to automate this script. In Part 7, we did some refactoring to make it simpler to add new requests.

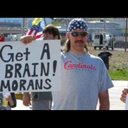

In this part, we are going to add a request for fetching tweets from the realDonaldTrump Twitter account. If you only cared about checking Twitter for updates, you would probably just use the Twitter APIs. But it’s hard to find sites whose Terms and Conditions and robots.txt are acceptable for scraping, and Twitter fits the bill as of the time of this posting. And besides, you can get the latest Trump tweets before they’re disseminated all over the interwebs!

Support multiple requests

Let’s start by updating scraper_requests.py and scraper.py to handle multiple requests.

# scraper_requests.py

# DELETE

def get_request():

# REPLACE WITH

def get_requests():

# DELETE

request = {

# REPLACE WITH

requests = []

requests.append({

...

# DELETE

}

# REPLACE WITH

})

# scraper.py

# DELETE

request = scraper_requests.get_request()

# REPLACE WITH

requests = scraper_requests.get_requests()

# ADD

for request in requests:

# >> SHIFT OVER THE REST OF THE PROCESSING CODE

Test it out in dryrun mode to verify that we didn’t break anything.

$ python scraper.py --verbose --dryrun

Connecting to Twilio

Requesting page: http://andythemoron.com

Parsing response with BeautifulSoup

Extracting data from response

Extracted 9 items

...

Choose your index

To check for new blog posts, we’ve been keying on the URLs of the articles. For tweets, the more natural key is the unique identifier attached to each one, so a hard-coded “link” key no longer works for every request. Let’s update our code so that each extract method returns a tuple of the index to key on and the extracted data, instead of just the extracted data.
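As a rough, self-contained illustration of where these changes are heading, the sketch below loops over several requests and checks for new items using whichever index each extract method returns. Everything in it is a stand-in: InMemoryCollection is a hypothetical substitute for a pymongo collection, the two extract functions return canned data, and the list is called scrape_requests only to avoid confusion with the Requests library.

```python
class InMemoryCollection:
    """Hypothetical stand-in for a pymongo collection (exact-match queries only)."""

    def __init__(self):
        self.docs = []

    def find_one(self, query):
        # Return the first stored document matching every key/value in the query.
        for doc in self.docs:
            if all(doc.get(key) == value for key, value in query.items()):
                return doc
        return None

    def insert_one(self, doc):
        self.docs.append(doc)


def extract_posts(_soup):
    # Canned data: blog posts are keyed on their URL.
    return ("link", [{"link": "http://example.com/post-1", "title": "Post 1"}])


def extract_tweets(_soup):
    # Canned data: tweets are keyed on their unique id.
    return ("id", [{"id": "1001", "text": "Hello"}, {"id": "1002", "text": "World"}])


def find_new_items(index, extracted, collection):
    """Store and return the items whose index value has not been seen before."""
    new_items = []
    for item in extracted:
        if collection.find_one({index: item[index]}) is None:
            collection.insert_one(item)
            new_items.append(item)
    return new_items


scrape_requests = [
    {"extract_method": extract_posts, "collection": InMemoryCollection()},
    {"extract_method": extract_tweets, "collection": InMemoryCollection()},
]

first_run = []
second_run = []
for run in (first_run, second_run):
    for request in scrape_requests:
        index, extracted = request["extract_method"](None)
        run.append(len(find_new_items(index, extracted, request["collection"])))

print(first_run)   # [1, 2]
print(second_run)  # [0, 0]
```

The second pass finds nothing new because every item was stored on the first pass under its own index, which is the behavior we want from the real script.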

# andy_the_moron_parser.py

# DELETE

return extracted

# REPLACE WITH

return ('link', extracted)

# scraper.py

# DELETE

extracted = request['extract_method'](soup)

# REPLACE WITH

(index, extracted) = request['extract_method'](soup)

# DELETE

scraper_utils.process_extracted(

twilio_client, extracted, request['collection'], from_number, to_number,

# REPLACE WITH

scraper_utils.process_extracted(

twilio_client, index, extracted, request['collection'], from_number, to_number,

# scraper_utils.py

# DELETE

def process_extracted(

twilio_client, extracted, collection, from_number, to_number, format_method, seed_db, dry_run, verbose):

# REPLACE WITH

def process_extracted(

twilio_client, index, extracted, collection, from_number, to_number, format_method, seed_db, dry_run, verbose):

# DELETE

if collection.find_one({"link": item["link"]}) is None:

# REPLACE WITH

if collection.find_one({index: item[index]}) is None:

Let’s verify that everything still works…

$ python scraper.py --verbose --dryrun

Connecting to Twilio

Requesting page: http://andythemoron.com

Parsing response with BeautifulSoup

Extracting data from response

Extracted 9 items

...

Add Twitter parsing

Let’s start by adding the request for Donald Trump’s tweets.

# scraper_requests.py

# ADD

requests.append({

'base_url' : 'http://twitter.com/realdonaldtrump',

'extract_method' : twitter_parser.extract_tweets,

'format_method' : twitter_parser.format_tweet,

'collection' : db_client.my_db.my_tweets

})

Now let’s add the Twitter-specific parsing and formatting methods. Using your browser development tools (see Part 2), you’ll notice that tweets are “li” elements wrapping a “div” element with the following classes: tweet, js-stream-tweet, js-actionable-tweet, js-profile-popup-actionable, original-tweet, js-original-tweet, has-cards, and has-content. In writing a parsing method, I initially tried extracting the “div” elements of class “tweet”, but that class also appears on one additional element that is not a visible tweet. “js-stream-tweet” seems to fit the bill for the tweets we’ll want to scrape. We can fetch them like so: soup.find_all('div', class_='js-stream-tweet').

These div elements of class “js-stream-tweet” contain metadata we might want, such as the unique tweet id (data-tweet-id), the tweeter’s Twitter handle (data-screen-name), and the retweeter’s Twitter handle, if applicable (data-retweeter).

You’ll notice that the actual content of the tweet is contained inside a p element whose classes include “js-tweet-text”, which sits inside a div element of class “js-tweet-text-container”, which in turn sits inside a div element of class “content”. Given a “tweet” that is one of the “js-stream-tweet” divs we extracted earlier, we can fetch the tweet’s text like this: tweet_text = tweet.find('div', class_='js-tweet-text-container').find('p', class_='js-tweet-text').text.
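To make the selectors above concrete, here is a small sketch run against made-up markup. The HTML is a heavily trimmed, hypothetical imitation of the structure described above (the ids, handles, and text are invented), and the None checks are an extra precaution for markup that lacks a text container rather than something the real page is known to need.

```python
from bs4 import BeautifulSoup

# Hypothetical, heavily trimmed markup modeled on the structure described above.
SAMPLE_HTML = """
<ol>
  <li>
    <div class="tweet js-stream-tweet original-tweet"
         data-tweet-id="1001" data-screen-name="someUser"
         data-retweeter="someRetweeter">
      <div class="content">
        <div class="js-tweet-text-container">
          <p class="tweet-text js-tweet-text">Hello world</p>
        </div>
      </div>
    </div>
  </li>
  <li>
    <div class="tweet js-stream-tweet original-tweet"
         data-tweet-id="1002" data-screen-name="someRetweeter">
      <div class="content">
        <div class="js-tweet-text-container">
          <p class="tweet-text js-tweet-text">Second tweet</p>
        </div>
      </div>
    </div>
  </li>
</ol>
<div class="tweet">not a visible tweet</div>
"""

soup = BeautifulSoup(SAMPLE_HTML, "html.parser")

# class_ matches any element that has the given class among its classes,
# so the broader "tweet" class also picks up the extra, non-tweet div.
by_tweet_class = soup.find_all("div", class_="tweet")
by_stream_class = soup.find_all("div", class_="js-stream-tweet")
print(len(by_tweet_class), len(by_stream_class))  # 3 2

rows = []
for tweet in by_stream_class:
    container = tweet.find("div", class_="js-tweet-text-container")
    paragraph = container.find("p", class_="js-tweet-text") if container else None
    rows.append({
        "id": tweet.get("data-tweet-id"),
        "tweeter": tweet.get("data-screen-name"),
        # .get() returns None when the attribute is absent (not a retweet).
        "retweeter": tweet.get("data-retweeter"),
        "text": paragraph.text if paragraph else None,
    })

for row in rows:
    print(row)
```

Because tweet.get() returns None for a missing attribute, a plain tweet and a retweet can share the same dictionary shape, which keeps the formatting step simple.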

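Let’s create a new file twitter_parser.py to parse and format tweet data. Since the request we just added refers to twitter_parser.extract_tweets and twitter_parser.format_tweet, scraper_requests.py will also need to import twitter_parser.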

# twitter_parser.py

def extract_tweets(soup):
    extracted = []
    tweets = soup.find_all("div", class_="js-stream-tweet")
    for tweet in tweets:
        tweet_text = tweet.find("div", class_="js-tweet-text-container").find("p", class_="js-tweet-text").text

        # twitter pic links aren't always separated from the content; add a space so we can click on them
        tweet_pic_link_loc = tweet_text.find("pic.twitter.com")
        if tweet_pic_link_loc != -1:
            tweet_text = tweet_text[:tweet_pic_link_loc] + " " + tweet_text[tweet_pic_link_loc:]

        tweet_id = tweet.get("data-tweet-id")
        tweeter = tweet.get("data-screen-name")
        retweeter = tweet.get("data-retweeter")
        extracted.append({
            'id' : tweet_id,
            'tweeter' : tweeter,
            'retweeter' : retweeter,
            'text' : tweet_text
        })
    return ('id', extracted)


def format_tweet(tweet):
    if tweet['retweeter'] is not None:
        formatted_tweet = "New retweet from " + tweet['retweeter'] + " of " + tweet['tweeter']
    else:
        formatted_tweet = "New tweet from " + tweet['tweeter']
    formatted_tweet += ": " + tweet['text'] + "\n"
    return formatted_tweet

Let’s check it out!

$ python scraper.py --verbose --dryrun

Connecting to Twilio

Requesting page: http://andythemoron.com

Parsing response with BeautifulSoup

Extracting data from response

Extracted 9 items

Requesting page: http://twitter.com/realdonaldtrump

Parsing response with BeautifulSoup

Extracting data from response

Extracted 20 items

Found a new item: {'id': '851247294854856706', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'Thank you @USNavy! #USAhttps://twitter.com/usnavy/status/851227376826634243\xa0…'}

Found a new item: {'id': '851092618754891781', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': '...confidence that President Al Sisi will handle situation properly.'}

Found a new item: {'id': '851092500056072198', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'So sad to hear of the terrorist attack in Egypt. U.S. strongly condemns. I have great...'}

Found a new item: {'id': '850800045012201473', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'Judge Gorsuch will be sworn in at the Rose Garden of the White House on Monday at 11:00 A.M. He will be a great Justice. Very proud of him!'}

Found a new item: {'id': '850785347038576640', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': "The reason you don't generally hit runways is that they are easy and inexpensive to quickly fix (fill in and top)!"}

Found a new item: {'id': '850723509370327040', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'Congratulations to our great military men and women for representing the United States, and the world, so well in the Syria attack.'}

Found a new item: {'id': '850722694958075905', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': '...goodwill and friendship was formed, but only time will tell on trade.'}

Found a new item: {'id': '850722648883638272', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'It was a great honor to have President Xi Jinping and Madame Peng Liyuan of China as our guests in the United States. Tremendous...'}

Found a new item: {'id': '850488492828360704', 'tweeter': 'IvankaTrump', 'retweeter': 'realDonaldTrump', 'text': "Very proud of Arabella and Joseph for their performance in honor of President Xi Jinping and Madame Peng Liyuan's official visit to the US! pic.twitter.com/fu3RIh26UO"}

Found a new item: {'id': '850053194923298816', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'It was an honor to host our American heroes from the @WWP #SoldierRideDC at the @WhiteHouse today with @FLOTUS, @VP and @SecondLady. #USA pic.twitter.com/puVTKwUfRp'}

Found a new item: {'id': '849807049118625792', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'JOBS, JOBS, JOBS!\nhttps://instagram.com/p/BShtQnLgquy/\xa0 pic.twitter.com/B5Qbn6llzE'}

Found a new item: {'id': '849732911377117185', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': "I am deeply committed to preserving our strong relationship & to strengthening America's long-standing support for Jordan. @KingAbdullahII. pic.twitter.com/ogzxuZ7kla"}

Found a new item: {'id': '849366930133839872', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'Great to talk jobs with #NABTU2017. Tremendous spirit & optimism - we will deliver!http://thehill.com/policy/transportation/327248-trump-promises-to-rebuild-country-in-speech-to-construction-workers\xa0…'}

Found a new item: {'id': '849345573509574665', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'Thank you Sean McGarvey & the entire Governing Board of Presidents for honoring me w/an invite to speak. #NABTU2017 http://45.WH.Gov/pqxHvm\xa0 pic.twitter.com/j0CRhiyvDu'}

Found a new item: {'id': '849330281412792320', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': '.@WhiteHouse #CEOTownHall\nhttp://45.WH.Gov/iQXunx\xa0 pic.twitter.com/XHfQ6zmF2H'}

Found a new item: {'id': '849238202992836608', 'tweeter': 'DRUDGE_REPORT', 'retweeter': 'realDonaldTrump', 'text': 'RICE ORDERED SPY DOCS ON TRUMP? http://drudge.tw/2oUA1uA\xa0'}

Found a new item: {'id': '848983209580822529', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'It was an honor to welcome President Al Sisi of Egypt to the @WhiteHouse as we renew the historic partnership between the U.S. and Egypt. pic.twitter.com/HE0ryjEFb6'}

Found a new item: {'id': '848957498040217603', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'Looking forward to hosting our heroes from the Wounded Warrior Project (@WWP) Soldier Ride to the @WhiteHouse on Thursday! pic.twitter.com/CkN50HPgeo'}

Found a new item: {'id': '848928217138384896', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': 'Getting ready to meet President al-Sisi of Egypt. On behalf of the United States, I look forward to a longand wonderful relationship.'}

Found a new item: {'id': '848880519458717698', 'tweeter': 'realDonaldTrump', 'retweeter': None, 'text': '.@FoxNews from multiple sources: "There was electronic surveillance of Trump, and people close to Trump. This is unprecedented." @FBI'}

Awesome! After the groundwork we’ve laid, it wasn’t too much of a pain to add parsing of Twitter for new Donald Trump tweets. From here, you can try adding your own requests and parsers, and finding all sorts of applications for this tool!
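One rough edge is visible in the log above: ordinary links can also end up glued to the preceding word (for example “#USAhttps://…”), and the pic.twitter.com fix in extract_tweets does not cover those. If that bothers you, a possible tweak is sketched below. The helper name separate_links and the regular expression are my own assumptions, not part of the scraper as written, and the pattern is only a heuristic (it would, for instance, split a URL that embeds a second URL in its query string).

```python
import re

# Matches the start of a link that is glued to the preceding character.
# The lookbehind requires a non-whitespace, non-slash character, so text that
# is already separated is left alone, and "pic.twitter.com/" is not split off
# when it directly follows the "//" of a full URL.
_GLUED_LINK = re.compile(r"(?<=[^\s/])(https?://|pic\.twitter\.com/)")


def separate_links(text):
    """Insert a space before any link that directly follows non-whitespace text."""
    return _GLUED_LINK.sub(r" \1", text)


glued = "Thank you @USNavy! #USAhttps://twitter.com/usnavy/status/851227376826634243"
already_fine = "visit to the US! pic.twitter.com/fu3RIh26UO"

print(separate_links(glued))
print(separate_links(already_fine))
```

The first call inserts a single space before “https://”, and the second returns its input unchanged, so the helper could be applied to every tweet’s text without doubling up spaces.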
